Open Letter to Jan Molak

Jan,

Your recent updates to serenity-js.org are a significant improvement, and they highlight something I believe is increasingly important for our industry: testing strategy matters more than tooling.

We've exchanged a few DMs about the accessibility of Serenity/JS, particularly how difficult it can be for newcomers to understand where it fits into their testing journey. When you asked me to review the latest changes to the website, I realised my feedback was about more than the site itself, so I thought I'd share it in the form of an open letter.

Meeting People Where They Are

What stands out most about the updated site is how effectively it meets people where they are. Instead of assuming visitors already understand the Screenplay Pattern, it starts with the problems teams are trying to solve and then shows different paths into Serenity/JS.

Whether someone is looking for better reporting, more maintainable tests, or a full Screenplay-based architecture, they can quickly understand where Serenity/JS fits and how it might help.

That matters because most teams don't wake up looking for a new framework. They are looking for solutions to real problems. By focusing on those problems first, the site makes Serenity/JS far more approachable and relevant.

A Shift in the Industry

Reviewing the site also reminded me of a broader shift happening across our industry.

For years, conversations about test automation have centred on tools. Playwright versus Cypress. Selenium versus WebdriverIO. Page Objects versus Screenplay.

Today, AI has become part of that conversation as well.

And honestly, that's a good thing.

AI is already helping teams write tests faster, understand systems more quickly, improve documentation, and eliminate repetitive work. Used well, it can make automation engineers dramatically more productive.

Yet the rise of AI also reinforces an important truth: the value of testing has never been in writing code.

The real value lies in understanding risk, identifying meaningful behaviours, and deciding what is worth testing in the first place.

Those are fundamentally human skills.

The Impact of AI on Testing

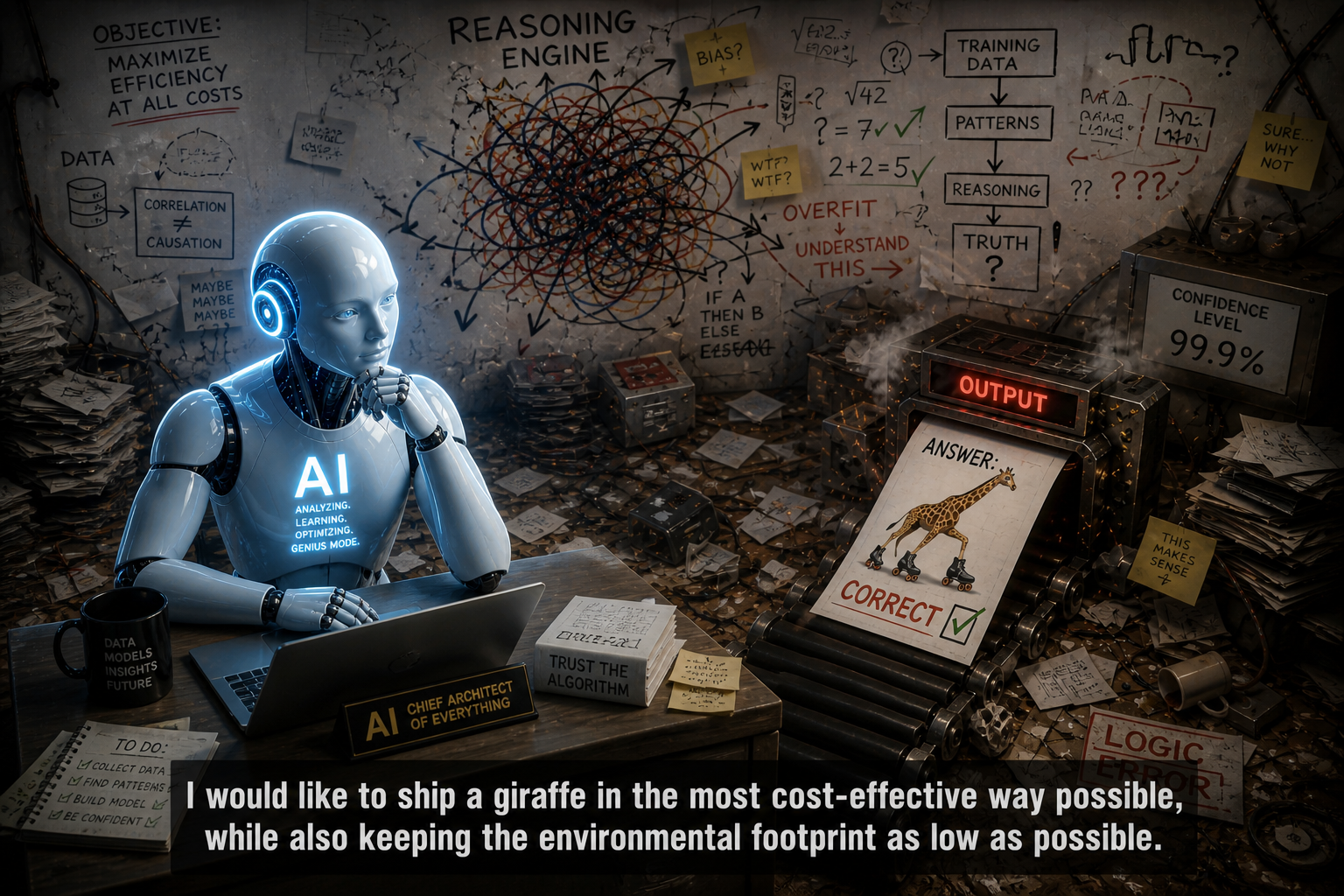

An AI can generate a Playwright test in seconds. It can even generate hundreds of them. But someone still needs to decide which behaviours matter, what level of confidence is required, and how testing supports the goals of the business.

As AI lowers the barrier to creating automation code, the differentiator will not be who can generate the most tests. It will be who can design the most effective testing strategy and communicate it clearly.

This is why I believe test strategy should always come before tooling.

Not because tools don't matter; they absolutely do.

But the best tools amplify a good strategy rather than compensate for the lack of one.

Why Serenity/JS Matters

This is where I think Serenity/JS becomes particularly relevant.

Its value isn't that it can automate a browser. Playwright already does that brilliantly.

Its value is that it encourages teams to think about behaviour, intent, and outcomes. It helps automation express what users are trying to achieve rather than simply documenting which buttons were clicked.

In an AI-assisted world, that emphasis on intent becomes even more important. When generating automation becomes easier, the quality of the thinking behind that automation becomes the real advantage.

What I appreciate about the updated Serenity/JS website is that it moves the conversation in exactly that direction. It explains not only what Serenity/JS is, but also the problems it helps teams solve and the thinking behind it.

The result is a much clearer path for newcomers and a stronger message for experienced practitioners.

Conclusion

In a world where AI is making test automation more accessible than ever, helping people think more clearly about testing may be one of the most valuable things a framework can do.

Congratulations on the work.

Best, Jan
